PyPy and RPython

DesertPy

22 October 2014

Tim Hochberg

This talk with involve a brief discussion of PyPy, a Python interpreter implemented in Python, followed by a longer discussion of the RPython toolchain which makes this implementation not only possible, but faster than the standard C-Python interpreter. The discussion of RPython will be illustrated using a simple interpreter implemented in Python, which is then translated to native code using the RPython toolchain.

Also, there will be Mandelbrot sets.

What is PyPy

- An implementation of Python written in Python because...

- Python allows easier experimentation with new features and approaches

- Stackless (microthreads)

- Sandboxing

- Software Transactional Memory

- ...

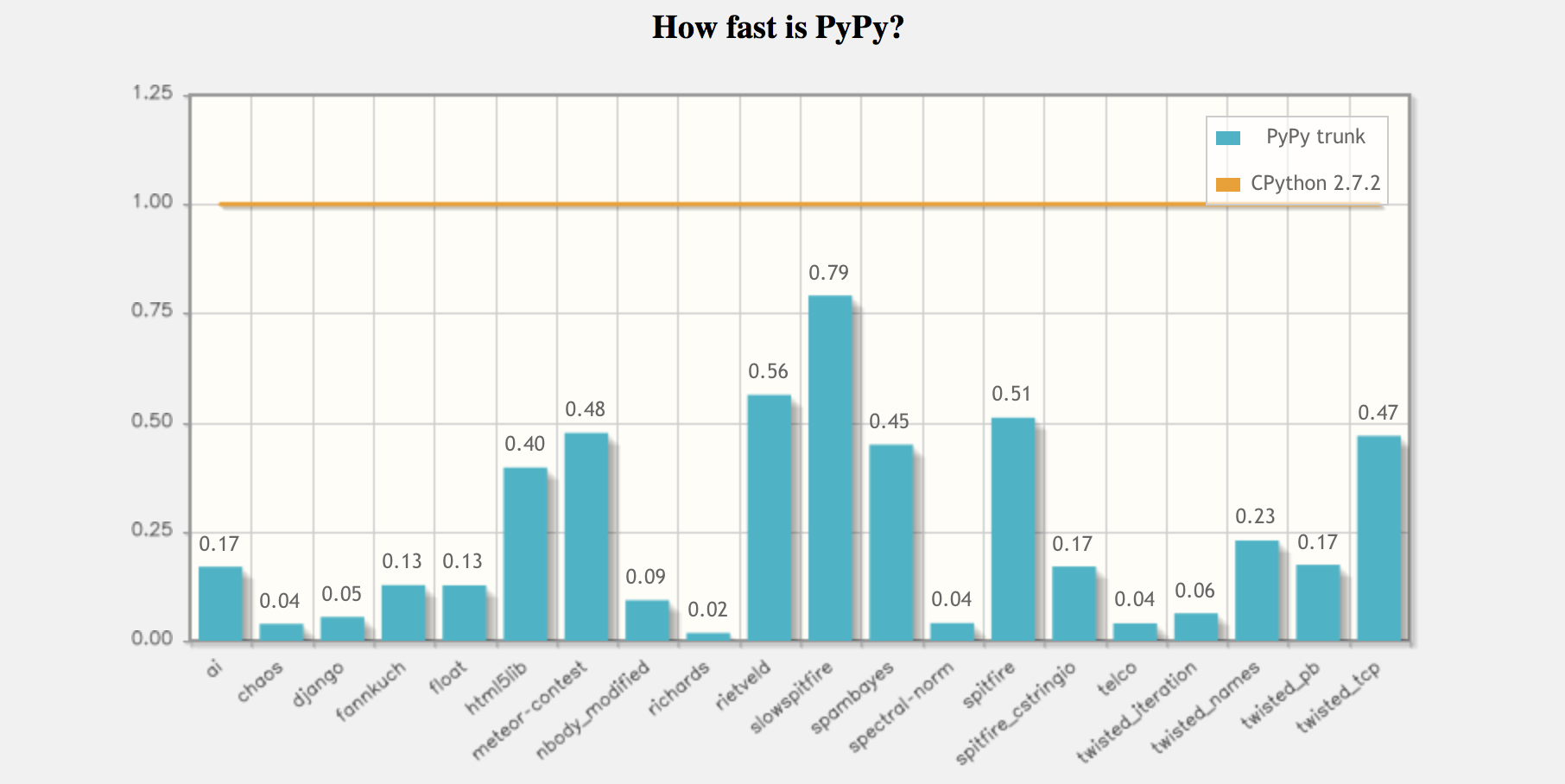

- Also, over 6× faster than CPython on average!

What's the Catch?

- PyPy relies on a JIT for speed; not effective for all code.

- The quoted speedups are based on benchmarks. YMMV.

- However, everything I timed was faster using PyPy.

- Not all modules based on C (or other languages) are working.

- There is a list of compatible modules at bitbucket.org/pypy/compatibility

- Notably, NumPy only partially working. Also, slow.

PyPy Example

# mandelbrot.py

def iterate_z(re0, im0, re, im, n):

for i in range(n):

# Z = Z**2 + Z0

temp = re*re - im*im + re0

im = 2*re*im + im0

re = temp

if hypot(re, im) > r_max:

return i

return n

def grey_value(re, im):

return int(scale * iterate_z(re, im, 0, 0, n))

def pixel(i, j):

return grey_value((x_offset + pixel_size * i), (y_offset + pixel_size * j))

def generate_pgm():

write("P2\n")

write("%s\n" % i_max)

write("%s\n" % j_max)

write("%s\n" % colour_max)

for j in range(j_max):

for i in range(i_max):

write("%s\n" % pixel(i,j))

# Setup code and boilerplate stuff

# ....

Timing

- Python 2.7.7: 15.4 seconds

- PyPy 2.3.1 (Python 2.7.6) : 3.0 seconds

5x Faster

- In line with benchmarks.

How is this possible?

PyPy (Python) ➡ [Magic Translator] ➡ PyPy (native code with JIT added automagically)

- If it's possible to translate Python to fast native code, why not always do it?

- PyPy is really written in RPython

- RPython is a subset of Python

- Hints are added to help the the translator

PyPy (RPython with hints) ➡ [RPython Toolchain] ➡ PyPy (native code with JIT)

RPython

- Whole programs; not modules

- Not fully documented

- The exact definition is “RPython is everything that our translation toolchain can accept”

- Many of the dynamic features of Python unavailable after module execution

def works():

a = "five"

b = "ten"

c1 = a + b

a = 5

b = 10

c2 = a + b

return c1, c2

def fails(a, b):

return a + b

works()

fails("five", "ten")

fails(5, 10)

<pre>

[translation:info] Error:

[...about 50 lines of traceback...]

[translation:ERROR] UnionError:

[translation:ERROR]

[translation:ERROR] Offending annotations:

[translation:ERROR] SomeString(const='five', no_nul=True)

[translation:ERROR] SomeInteger(const=5, knowntype=int, nonneg=True,unsigned=False)

[translation:ERROR]

[translation:ERROR]

[translation:ERROR] Occurred processing the following simple_call:

[translation:ERROR] (KeyError getting at the binding!)

[translation:ERROR] v0 = simple_call((function fails), (5), (10))

[translation:ERROR]

[translation:ERROR] In <FunctionGraph of (mandelbrot:91)main at 0x108091088>:

[translation:ERROR] Happened at file mandelbrot.py line 96

[translation:ERROR]

[translation:ERROR] c1, c2 = works()

[translation:ERROR] write(c1)

[translation:ERROR] write(str(c2))

[translation:ERROR] c1 = fails("five", "ten")

[translation:ERROR] ==> c2 = fails(5, 10)

[translation:ERROR] write(c1)

[translation:ERROR] write(str(c2))

[translation:ERROR] generate_pgm()

[translation:ERROR]

[translation:ERROR] Known variable annotations:

[translation:ERROR]

[translation:ERROR] Processing block:

[translation:ERROR] block@6 is a <class 'rpython.flowspace.flowcontext.EggBlock'>

[translation:ERROR] in (mandelbrot:91)main

[translation:ERROR] containing the following operations:

[translation:ERROR] v2 = getitem(v1, (0))

[translation:ERROR] v3 = getitem(v1, (1))

[translation:ERROR] v4 = simple_call((function write), v2)

[translation:ERROR] v5 = str(v3)

[translation:ERROR] v6 = simple_call((function write), v5)

[translation:ERROR] v7 = simple_call((function fails), ('five'), ('ten'))

[translation:ERROR] v0 = simple_call((function fails), (5), (10))

[translation:ERROR] v8 = simple_call((function write), v7)

[translation:ERROR] v9 = str(v0)

[translation:ERROR] v10 = simple_call((function write), v9)

[translation:ERROR] v11 = simple_call((function generate_pgm))

[translation:ERROR] --end--

</pre>

Interpreter Example

- First attempt was a basic lisp-like interpreter based on Peter Norvig's lis.py

- Only partially successful

- Second attempt operated on an assembly-like language.

- Uglier

- More successful as an experiment

(Ugly) Interpreter Example

- Portion of assembly language program for generating the Madelbrot set

Equivalent to earlier iterate_z function

label iterate_z # for k in range(n): set k 0 set re 0.0 set im 0.0 label k_loop # temp = re*re - im*im + re0 exec temp mul re re exec temp1 mul im im exec temp sub temp temp1 exec temp add temp re0 # im = 2*re*im + im0 exec im mul 2.0 im exec im mul im re exec im add im im0 # re = temp set re temp # if hypot(re, im) > r_max: exec mag hypot re im exec flag gt mag r_max branchif flag return_from_iterate # end of loop exec k add k 1 exec flag lt k n branchif flag k_loop # return n set k n jump return_from_iterate

Main Loop

def execute(program):

code, mem = compile(program)

pc = 0

try:

while pc < len(program):

jitdriver.jit_merge_point(pc=pc, mem=mem, code=code, program=program)

op = opcode(code, pc)

if op == C_SET:

a = opcode(code, pc+1)

b = opcode(code, pc+2)

mem[a] = mem[b]

pc += 3

elif op == C_EXEC_1:

symbol = opcode(code, pc+1)

op = opcode(code, pc+2)

a = mem[opcode(code, pc+3)]

try:

mem[symbol] = monops[op](a)

except KeyError:

raise ValueError("illegal unary op: %s" % op)

pc += 4

elif op == C_EXEC_2:

symbol = opcode(code, pc+1)

op = opcode(code, pc+2)

a = mem[opcode(code, pc+3)]

b = mem[opcode(code, pc+4)]

try:

mem[symbol] = binops[op](a, b)

except KeyError:

raise ValueError("illegal binary op: %s" % op)

pc += 5

elif op == C_BRANCHIF:

x = opcode(code, pc+1)

loc = opcode(code, pc+2)

if int_value(mem[x]):

pc = loc

else:

pc += 3

elif op == C_JUMP:

loc = opcode(code, pc+1)

pc = loc

elif op == C_DISPLAY:

arg = opcode(code, pc+1)

os.write(stdout_fd, '%s\n' % mem[arg].as_text())

pc += 2

elif op == C_END:

pc = len(code)

else:

raise ValueError("unknown command")

return 0

except:

os.write(stderr_fd, "ERROR at PC: %s\n" % pc)

return 1

How Does This Perform With Python?

$ time python machine.py mandelbrot.mach > img.pgm

jeopardy theme

real 91m46.877suser 26m4.570ssys 0m3.152s

Over 350 times worse than our original Python program!

How Does This Perform With PyPy?

$ time pypy machine.py mandelbrot.mach > img.pgm

real 2m33.314suser 2m29.942ssys 0m2.939s

Now only 10 times worse than our original program

Translating using RPython

$ pypy rpython machine.py

Forty seconds and many messages to stdout later we have an executable machine-c.

$ time ./machine-c mandelbrot.mach > img.pgm

real 0m6.137suser 0m4.528ssys 0m1.605s

Suddenly our simple interpreter is running faster than CPython!

Adding a JIT

This involves about 5 lines of hints and boilerplate and passing the --opt==jit option to RPython.

jitdriver = JitDriver(greens=['pc', 'code', 'program'], reds=['mem'])

def jitpolicy(driver):

from rpython.jit.codewriter.policy import JitPolicy

return JitPolicy()

# And, at the top of the event loop (we've seen this before)

jitdriver.jit_merge_point(pc=pc, mem=mem, code=code, program=program)

$ pypy rpython --opt=jit machine.py

Four minutes and many, many messages later we have a new machine-c executable.

JIT Improvement

$ time ./machine-c mandelbrot.mach > img.pgm

real 0m3.155suser 0m1.561ssys 0m1.589s

- Another factor of two improvement:

- almost as fast as

mandelbrot.pyrunning on PyPy - 5× faster than

mandelbrot.pyrunning on CPython - 160× faster than

mandelbrot.machrunning onmachine.pyrunning on PyPy - 1700× faster than

mandelbrot.machonmachine.pyrunning on CPython

Speed Summary

| mandelbrot.py | machine.py | skeem.py | |

|---|---|---|---|

| Python 2.7 | 15.4 | 5506 | 45095 |

| PyPy | 3.0 | 153 | 532 |

| RPython | 2.3 | 6.1 | 98 |

| RPython JIT | – | 3.2 | 504 |

Conclusion

- PyPy

- Fast

- Missing some modules

- RPython

- Harder than writing a interpreter in Python.

- Much easier than writing a fast interpreter in Python (or any other language that I know of).

- Might also be useful for writing some standalone applications.

Thanks

Tim Hochberg

tim@bitsofbits.com

Links

- http://pypy.org/ – main pypy site.

- http://speed.pypy.org/ – pypy benchmarks.

- http://rpython.readthedocs.org/ –

- http://morepypy.blogspot.com/2011/04/tutorial-writing-interpreter-with-pypy.html – writing a BF interpreter with PyPy

- http://bitly.com/bundles/cfbolz/1/ – series of posts on pypy based interpreters

- https://bitbucket.org/pypy/compatibility/wiki/Home – Wiki of known compatible and incompatible extension modules

- http://www.bitsofbits.com/2014/09/28/numpy-on-pypy-with-a-side-of-psymeric/ – Some of experiments by me with NumPy on PyPy

- http://www.bitsofbits.com/2014/10/22/a-partially-successful-adventure-in-rpython/ – A bit about skeem.py

- https://github.com/bitsofbits/rpython-experiments – Code for this talk